Never invest your time in learning complex things. - Shekhar - Medium

Excerpt

The data scientist hype train has come to a grinding halt. It has been a joy ride for me, for I was one of the people who got hooked on data science as soon as it came out. Math, engineering and…

Summary

Main Summary

The article addresses the decline of the data scientist craze, a phenomenon that seemed unstoppable only a few years ago. The author, Shekhar, who identifies as an active participant in this trend, reflects on his personal experience in the field, highlighting how the combination of math, engineering and data analysis attracted many professionals. However, the initial enthusiasm has given way to a more critical view of the nature of the role and its real market value. The title of the article suggests a critique of investing excessive time in learning complex topics that, according to the author, may not be sustainable or profitable in the long run. This change in perspective reflects an evolution in the public perception of the data scientist role, from a highly valued profession to one whose relevance and real demand are being questioned. The article invites readers to reconsider their professional expectations and strategies in a constantly changing technological environment.

Key Points

- Decline of the data science hype: The initial enthusiasm for the data scientist profession has dropped considerably, suggesting a saturated market or an earlier overestimation of what the role could deliver.

- The author's personal experience: Shekhar shares his own path through the field, which provides an insider's perspective and first-hand testimony on how the role and the expectations around it have evolved.

- Critique of technical complexity: The title of the article takes a critical view of investing time in learning complex topics, which can be read as a warning against over-engineering or excessive specialization in areas that may become obsolete.

- Professional reassessment: The text implies a need to rethink learning and career development strategies, prioritizing more adaptable and sustainable skills over rigid specializations.

Analysis and Implications

This article reflects an important transition in the technology landscape, where professions once considered elite are starting to be viewed with more skepticism. The critique of investing in complex knowledge suggests a need for more pragmatic approaches to education and professional development. It may also have implications for how companies and individuals evaluate the return on investment of their technical training efforts.

Additional Context

The author's analysis is set in a period when automation and low-code tools are transforming traditional technical roles. This reinforces the idea that adaptability and practical relevance can outweigh mere technical complexity in the current environment.

Content

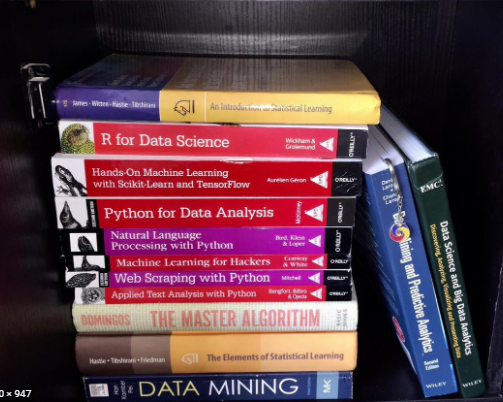

The data scientist hype train has come to a grinding halt. It has been a joy ride for me, for I was one of the people who got hooked on data science as soon as it came out. Math, engineering and the ability to predict stuff were very attractive indeed for a self-professed geek. I couldn’t resist, and soon I was devouring one book after another. I started with Springer publications (Max Kuhn), Trevor Hastie and a lot of O’Reilly books, and followed them up with statistics and math courses until I had the math and the techniques (linear/logistic regression, SVMs, random forests, decision trees and some 20 others) down pat. Sounds great, right? Not quite.

Then came the deep learning revolution. I was first exposed to it thanks to Jeremy Howard, who in my opinion still runs the best damn deep learning course on the internet. He explains vision, NLP and even machine learning on structured data. The guy is literally able to translate gobbledygook for the masses (me :-)). Plug: https://www.fast.ai/

I started to put my knowledge to work and quickly came to a few realizations.

Exploring the data is at the heart of Data science. That’s what gets a data scientist paid.

Labeling the data is an art. Most of the time the data won’t be labeled, or your company will be too stingy to pay manual labelers to do it for you (through Amazon SageMaker Ground Truth, for example).

Most of the data in your company will be structured data and not text or vision data.

Most of the problems that people think they need a machine learning model for can be solved without it.

The value of the model depends on how you present the results and not on how it performs.
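As a rough illustration of solving a problem without a model, the Python sketch below flags likely churners with a hand-written rule and scores that rule against known outcomes. The `Customer` fields, the thresholds (60 days, 3 tickets) and the toy records are all assumptions made up for this example, not data from any real company.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    days_since_last_order: int
    support_tickets: int
    churned: bool  # known outcome, used only to score the rule


def rule_predicts_churn(c: Customer) -> bool:
    # Hypothetical business rule: long inactivity or many complaints.
    return c.days_since_last_order > 60 or c.support_tickets >= 3


def accuracy(customers: list[Customer]) -> float:
    # Share of customers for whom the rule matches the known outcome.
    hits = sum(rule_predicts_churn(c) == c.churned for c in customers)
    return hits / len(customers)


# Made-up records, for illustration only.
TOY_CUSTOMERS = [
    Customer(5, 0, False),
    Customer(90, 1, True),
    Customer(12, 4, True),
    Customer(30, 1, False),
    Customer(75, 0, False),  # the rule gets this one wrong
]

if __name__ == "__main__":
    print(f"rule accuracy: {accuracy(TOY_CUSTOMERS):.2f}")
```

If a rule like this is already good enough for the decision at hand, a trained model has to beat it by a margin that justifies the extra work.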

Soon I became an expert in engineering the data and exploring the data, and began to predict futures (kidding). I was over the moon. The field itself was growing, every topic on the web was about data science, and soon it made its way to JavaScript, following Atwood’s Law.[4]

It was too good to be true

My mood soon went downhill. I saw a shiny new toy called AutoML get released. Then it dawned on me: the pride that I had felt in engineering the data, calling a few libraries and predicting the results had been commoditized by Google and the cloud giants. Microsoft and Google had released SOTA pre-trained models for vision, NLP and even structured data, and made them available through a click-based interface that trains the model and gives you back an API endpoint to call.[1]

Frantically, I began to search the internet for “Is Data Science really Dying?”.

It wasn’t that simple. I got conflicting answers. There were people who stuck to their guns that AutoML won’t work for structured data, recommendation engines or time series data.[3] They also argued that the job of a data scientist is simplified rather than made obsolete, as they are now free to concentrate on getting value from the data.

Well the last statement is true, but they were missing one important point.

The most glamorous part of a data scientist’s job was training the model. It wasn’t engineering the data, which is essentially 90% of the data scientist’s unsaid job description. In fact, most data science courses trained people to fit models rather than sift through data. Soon the complaints started to pour in over the internet. People trained in math and statistics came to realize that their job, most of the time, was only to munge data.

There were a few heroes left: people who clung to the notion that we still need to solve for drift, fairness, privacy, synthetic data and a whole lot of other things (which are not yet automated) [5], which they began to see as problems only after their glam work had been taken over. How soon before we have these as AutoML libraries or as knobs in the AutoML pipeline?
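To give a sense of how small some of these checks can be, here is a hedged Python sketch of one common way to measure drift, the Population Stability Index (PSI), which compares how a feature is distributed in training data versus live data. The function name, the bin count, the `eps` floor and the sample values are illustrative assumptions; a real pipeline would choose its own binning and alert threshold.

```python
import math


def psi(expected, actual, bins=4, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature.

    Bins are equal-width over the range of `expected` (the training sample);
    values of `actual` outside that range fall into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for a constant feature

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    train = [1, 2, 3, 4, 5, 6, 7, 8]  # made-up training values
    live = [6, 7, 8, 8, 8, 7, 6, 8]   # made-up live values, shifted upward
    print("same data:", psi(train, train))
    print("shifted data:", psi(train, live))
```

Identical samples give a PSI of zero, and the value grows as the live distribution moves away from the training one.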

There were also others who began to suggest that there was a certain kind of magic in training and deploying machine learning models, one that requires a specialized skill set (MLOps). Personally, I never understood why you would not deploy your model using something like AWS Step Functions. Anyway, Google’s Vertex AI commoditizes MLOps now.

Is this true only for Data Science?

Nope. Google open sourced Kubernetes, but very few companies, aside from those with regulatory concerns or a few others who are drunk, would actually run it themselves. Most would use a managed Kubernetes solution, a PaaS or even a serverless platform.[2]

There was a larger fundamental problem

Most of the people who did data science were not scientists. In fact, the definition of a data scientist was itself so hazy that I couldn’t tell data analysts from data scientists. There were running jokes that QAs/devs who found an issue with the data should be tagged as data scientists.

The second problem was that most data scientists were actually a new breed of data engineers: people who would painstakingly compile a data set and make sure it had no bugs before training on it. Call them Data Engineers ++ or Data Analysts ++. (A symbol in a job title. How cool.)

Was data really the new Oil?

Despite my ranting, I agree on this one. Google bought Kaggle for its datasets and not because it wasn’t evil. The volume of data that Google or Facebook has accumulated over the years has allowed them to build state-of-the-art models.

What about new models being developed daily?

These come only from the R&D departments of FAANG, or from companies that have acquired data oil and want to use it to develop their own models. Note that unless the type of prediction is novel, most of the models being discovered are only incremental improvements. These guys should be called Data/Model Scientists to distinguish them from Data Engineers ++.

Can software engineers do data science?

Contrary to what most people think, data science requires a different mode of thinking than software engineering. Software engineering is a mix of Type 1 (fast, intuitive) and Type 2 (slow, deliberate) thinking, while data science is mostly Type 2. Your thinking tends to slow down. I could do both, and I don’t think of myself as special. So you guys could as well.

So can you be a data scientist at the top?

Sadly, even though a lot of top researchers in the field (Jeremy Howard for one) keep repeating that a math degree is not needed and that the field is application oriented, companies still look to hire top data scientists with a master’s or a PhD in math and statistics.

So what’s the conclusion? Never learn complex things? Not quite.

There is still a huge demand for novel skills when something as complex as machine learning hits the market. My two cents: spend your time on learning and educating others, and buy your condo with the teaching job.

See you after I finish learning Quantum learning.

[1] The cloud providers weren’t the only ones on the AutoML bandwagon. There were other platforms, like H2O.ai and DataRobot, that were set to democratize (read: dumbify) machine learning. Google also introduced automated model design through neural architecture search.

[2] I have seen people vociferously argue in favor of container orchestration solutions even when PaaS and FaaS solutions are far easier to use, cold starts being one of their arguments. In my defense, cold starts have already been solved on AWS Lambda with provisioned concurrency, and even on PaaS offerings like Cloud Run.

[3] This has been disproved. Amazon’s deep learning recommendation models work far better than the staple collaborative filtering, content-based and matrix factorization models. Also, the model measures itself and learns on its own. Both Amazon and Google have SOTA time series models.

[4] Atwood’s Law: any application that can be written in JavaScript will eventually be written in JavaScript.

[5] Courting controversy.

Source: Medium